Creative AI Evaluation: How a High School Student's Minecraft Challenge Is Reshaping AI Benchmarking

In the rapidly evolving landscape of artificial intelligence, measuring the true capabilities of generative models remains one of the most significant challenges. Traditional benchmarks often fail to capture the nuanced abilities of these increasingly sophisticated systems. Enter a refreshing approach from an unexpected source: a high school student who has built a platform that pits AI models against each other in Minecraft building competitions, with humans acting as the judges.

This innovative approach, called MineBench, not only illustrates the creative potential of AI but also represents a shift toward more practical, intuitive evaluation methods that could have far-reaching implications for how we assess AI systems in the future.

MineBench: A New Paradigm in AI Evaluation

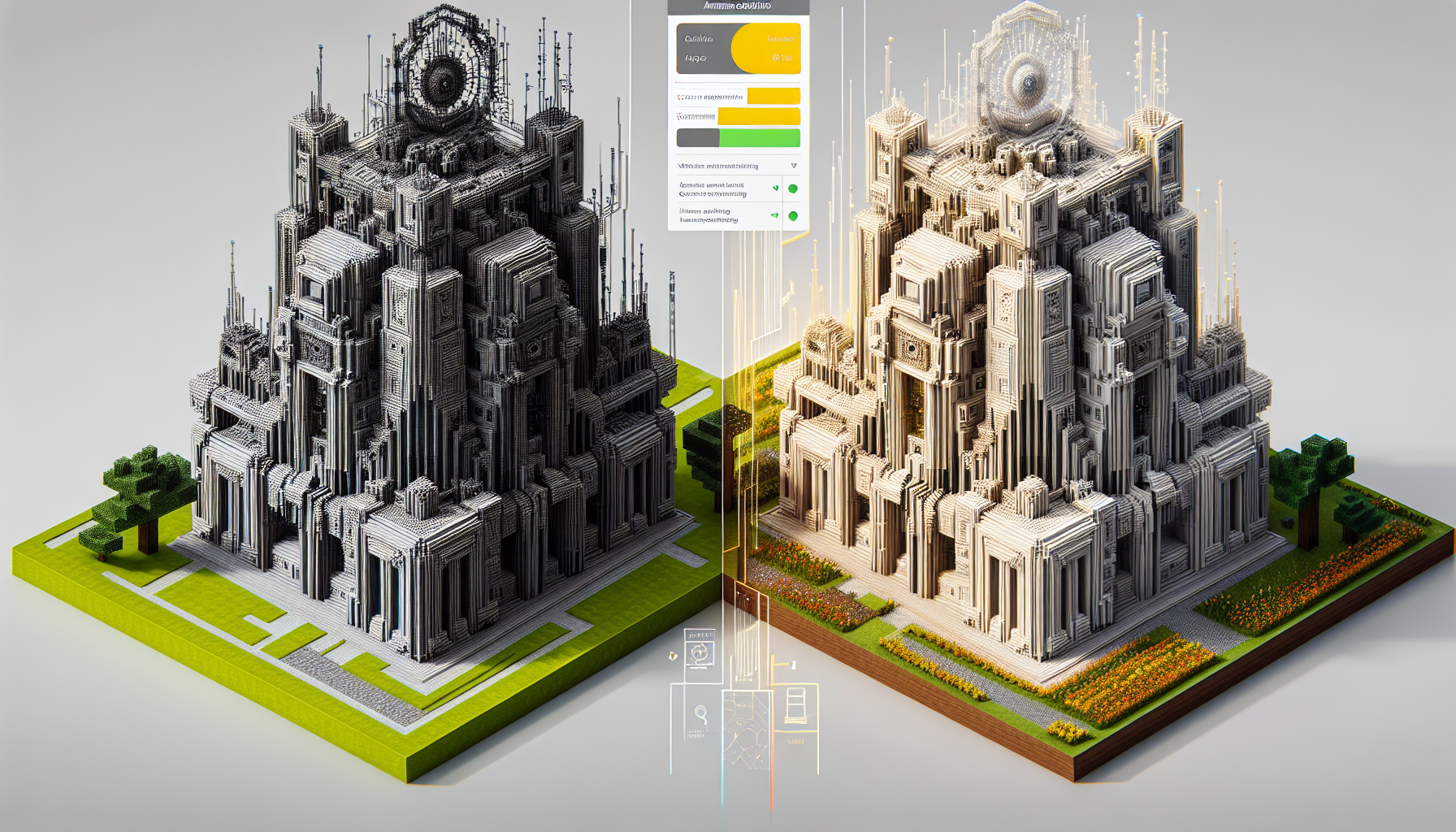

According to recent coverage from TechCrunch, a high school student has developed a website that allows users to challenge different AI models to create structures in Minecraft, the popular sandbox game known for its creative building mechanics. The platform then presents these AI-generated builds side by side, inviting human users to vote on which they believe demonstrates superior building skills.

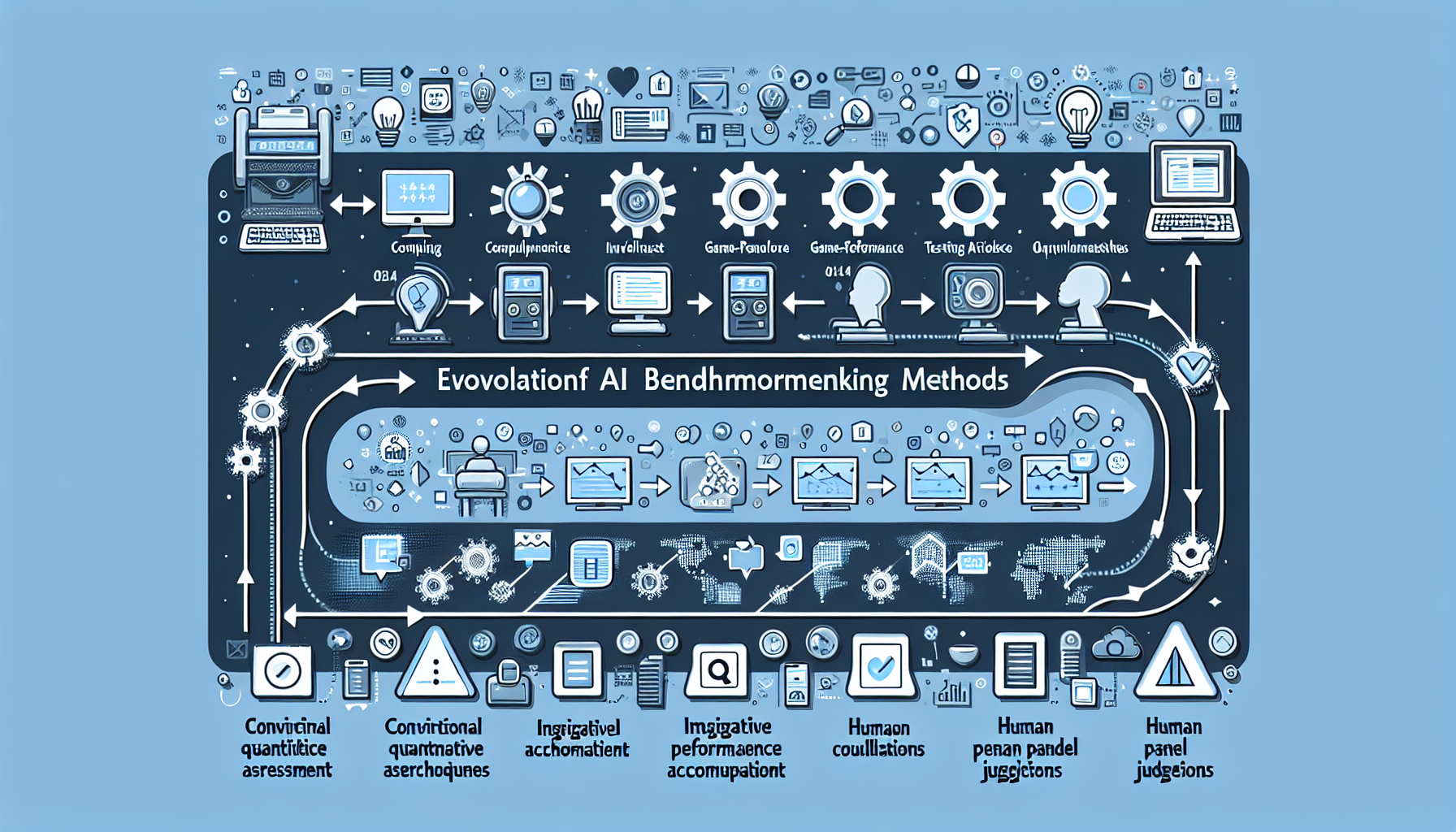

This evaluation approach stands in stark contrast to traditional AI benchmarking methods, which typically rely on quantitative metrics, standardized tests, or performance on narrowly defined tasks. While such metrics provide valuable data points, they often fail to capture more subjective qualities like creativity, aesthetic appeal, and adherence to cultural or contextual norms—all of which are essential aspects of truly advanced AI systems.

The genius of MineBench lies in its simplicity and accessibility. Minecraft provides a constrained but flexible environment where AI models must demonstrate spatial reasoning, aesthetic judgment, and an understanding of functional design. These are precisely the kinds of capabilities that are difficult to evaluate through traditional benchmarking frameworks but are immediately apparent to human judges.

The Technical Infrastructure Behind the Scenes

While the concept is straightforward, a platform like MineBench plausibly has to integrate several technologies:

- API Integration: The platform likely connects to various AI models through their APIs, sending prompts and receiving build instructions.

- Minecraft Interfacing: Translating AI outputs into actual Minecraft builds requires a custom interface with the game's mechanics.

- Visualization Systems: The website needs to render the resulting builds in a browser-friendly format for comparison.

- Voting Mechanisms: The platform needs a secure and fair voting system that aggregates user preferences while preventing manipulation.

- Data Collection: Behind the scenes, the platform presumably collects valuable data about which AI-generated designs humans prefer and why.

This infrastructure demonstrates how relatively simple front-end concepts can require sophisticated back-end engineering—a reminder that user-friendly innovation often rests on complex technical foundations.
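To make the API and Minecraft interfacing steps more concrete, here is a minimal Python sketch of how a model's reply could be turned into validated block placements. Everything specific in it is an assumption for illustration: the JSON reply shape, the `parse_build` function, the `MAX_SIZE` limit, and the `ALLOWED_BLOCKS` palette are hypothetical and are not taken from MineBench itself.

```python
from __future__ import annotations

import json
from dataclasses import dataclass

# Hypothetical limits and palette; a real platform would define its own.
MAX_SIZE = 32
ALLOWED_BLOCKS = {"stone", "oak_planks", "glass", "cobblestone"}


@dataclass(frozen=True)
class Block:
    x: int
    y: int
    z: int
    kind: str


def parse_build(raw: str) -> list[Block]:
    """Parse a model's JSON reply into validated block placements.

    Assumed reply shape:
        {"blocks": [{"x": 0, "y": 0, "z": 0, "type": "stone"}, ...]}
    Invalid entries are skipped instead of failing the whole build.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(data, dict):
        return []
    blocks = data.get("blocks")
    if not isinstance(blocks, list):
        return []

    placed: dict[tuple[int, int, int], Block] = {}
    for item in blocks:
        if not isinstance(item, dict):
            continue
        coords = (item.get("x"), item.get("y"), item.get("z"))
        # Accept only true integers inside the build volume.
        if not all(type(c) is int and 0 <= c < MAX_SIZE for c in coords):
            continue
        kind = item.get("type")
        if not isinstance(kind, str) or kind not in ALLOWED_BLOCKS:
            continue
        # A later placement at the same position replaces the earlier one.
        placed[coords] = Block(*coords, kind)
    return list(placed.values())
```

Skipping bad entries rather than rejecting the whole reply is a design choice: it keeps a mostly valid build viewable, at the cost of hiding how often a model produces malformed output. A stricter platform could count the skipped entries and report them alongside the build.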

Beyond Entertainment: Broader Implications for AI Evaluation

While MineBench might initially appear to be merely an entertaining way to compare AI models, its methodology has profound implications for the broader field of AI evaluation:

Human-centered evaluation: By placing human judgment at the center of the evaluation process, MineBench acknowledges that many AI applications ultimately aim to satisfy human preferences and needs. This approach recognizes that statistical performance doesn't always align with human satisfaction.

Domain-specific assessment: Rather than using generic metrics, this approach evaluates AI within a specific domain with clear success criteria. Such domain-specific benchmarking may provide more meaningful insights than general-purpose evaluations.

Democratized AI testing: By making AI evaluation accessible to non-experts, platforms like MineBench can collect a diverse range of perspectives on AI performance, potentially highlighting biases or preferences that might be missed in more controlled evaluations.

Interactive benchmarking: The competitive, side-by-side comparison format allows for direct evaluation of similar models, making relative strengths and weaknesses immediately apparent.
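Side-by-side votes can be turned into a ranking in several ways. The sketch below uses Elo ratings, a common choice for pairwise comparisons; the source does not say which method MineBench uses, so treat this as one possible approach. The `rate` function, the starting rating, and the `K` value are illustrative assumptions.

```python
from collections import defaultdict

K = 32          # update step size; a common default, not a MineBench setting
START = 1000.0  # rating assigned to a model on its first appearance


def expected_score(r_a: float, r_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400.0))


def rate(votes):
    """Compute ratings from (winner, loser) pairs, in the order cast."""
    ratings = defaultdict(lambda: START)
    for winner, loser in votes:
        exp_win = expected_score(ratings[winner], ratings[loser])
        delta = K * (1.0 - exp_win)  # upsets move ratings more
        ratings[winner] += delta
        ratings[loser] -= delta
    return dict(ratings)


if __name__ == "__main__":
    # Hypothetical model names and votes.
    votes = [("model_a", "model_b"), ("model_a", "model_c"), ("model_c", "model_b")]
    for name, score in sorted(rate(votes).items(), key=lambda kv: -kv[1]):
        print(f"{name}: {score:.1f}")
```

Elo depends on the order in which votes arrive. When that is undesirable, an order-independent model such as Bradley-Terry can be fitted to the same vote data.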

The Rise of Creative and Competitive AI Benchmarking

MineBench is not an isolated phenomenon but part of a growing trend toward more creative and competitive benchmarking approaches in the AI community. Other examples include:

- Competitions like the AI Art Challenge, where models compete to create artwork based on specific prompts

- AI Debate Platforms where language models engage in structured arguments evaluated by human judges

- Game-based AI competitions, ranging from chess and Go to more complex environments like StarCraft

- Turing test variations that focus on specific domains rather than general conversation

This trend reflects a maturation in how we think about AI capabilities—moving beyond simple metrics toward evaluations that capture the multifaceted nature of intelligence and creativity.

Educational Implications: Students as AI Innovators

Perhaps one of the most inspiring aspects of the MineBench story is that it was created by a high school student. This highlights how AI development and evaluation are becoming increasingly accessible to younger generations. Several factors contribute to this trend:

Simplified AI tools: The proliferation of user-friendly AI interfaces, APIs, and development platforms has lowered the technical barriers to entry.

Gaming as a gateway: Environments like Minecraft serve as familiar entry points for young people to engage with computational thinking and AI concepts.

Educational resources: The growing availability of AI education resources designed specifically for K-12 students has empowered younger developers to tackle sophisticated projects.

This democratization of AI development has profound implications for the future of the field, as it brings diverse perspectives and innovative approaches that might not emerge from traditional academic or industry settings.

Limitations and Challenges

Despite its innovative approach, MineBench and similar evaluation methods face several challenges:

Subjectivity concerns: Human judgment introduces subjectivity that can be influenced by cultural biases, aesthetic preferences, and other factors unrelated to objective AI capabilities.

Scale limitations: Collecting human evaluations is more time-consuming and expensive than automated metrics, potentially limiting the scope of such evaluations.

Domain specificity: While Minecraft building provides insights into certain AI capabilities, it doesn't necessarily predict performance in other domains.

Potential for gaming the system: As with any evaluation method, AI developers might eventually optimize their systems specifically for this type of benchmark rather than improving general capabilities.

These limitations suggest that platforms like MineBench should complement rather than replace more traditional evaluation methods, forming part of a comprehensive approach to understanding AI capabilities.
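One practical way to handle the scale limitation is to report uncertainty alongside any win rate, so that a lead built on a small number of votes is not over-read. The sketch below computes a Wilson score interval for a model's head-to-head win rate. It is a standard statistical technique offered here as an illustration, not something the source attributes to MineBench; the `wilson_interval` function name is our own.

```python
import math


def wilson_interval(wins: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a win rate (z=1.96 gives roughly 95%)."""
    if total == 0:
        return (0.0, 1.0)  # no votes yet: the win rate is unconstrained
    p = wins / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return (center - half, center + half)


if __name__ == "__main__":
    low, high = wilson_interval(60, 100)
    print(f"win rate 0.60, interval {low:.3f} to {high:.3f}")
```

With 60 wins out of 100 votes, the interval runs from about 0.50 to 0.69, so even a 60% win rate only barely separates the model from an even match. The interval addresses sampling noise only; it does not correct for the cultural or aesthetic biases described above.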

Relevance for Binbash Consulting Clients

For organizations working with Binbash Consulting, this development highlights several important considerations:

Evaluating AI solutions: When selecting AI systems for business applications, consider incorporating human judgment alongside technical metrics. This might involve having domain experts or end-users evaluate AI outputs in realistic scenarios rather than relying solely on benchmark figures.

Creative problem-solving: The MineBench approach demonstrates how familiar environments (like games) can be repurposed for novel technical applications. This kind of lateral thinking can be valuable when developing custom technical solutions for complex business problems.

Democratized development: The fact that a high school student created this platform serves as a reminder that innovative solutions can come from unexpected sources. Organizations might benefit from engaging with broader developer communities, including student hackathons or open innovation challenges.

User-centered metrics: When evaluating the success of deployed systems, consider implementing user satisfaction measures alongside technical performance metrics to capture the full impact of technology solutions.

At Binbash Consulting, we continue to monitor innovations in AI evaluation and development, helping our clients navigate the rapidly evolving technological landscape with solutions that balance technical excellence with practical effectiveness. The MineBench project serves as an inspiring example of how fresh perspectives can lead to valuable new approaches in technology assessment and implementation.

As AI becomes increasingly integrated into business operations and decision-making processes, understanding its capabilities and limitations through diverse evaluation methods will remain a critical aspect of responsible and effective AI adoption.